Open Science: REFSQ 2026

Open Science is a movement that seeks to make scientific research more transparent, rigorous, accessible, and reproducible. This increases the accountability of those contributing the research, but also the credit they receive for opening up their scientific work. Open Science aims to make research more open to participation, review/refutation, improvement, and (re-)use, so that the community benefits from research material that is available and reusable.

Like REFSQ’24 and REFSQ’25, the Open Science Track of REFSQ’26 encourages and supports authors in making their research and artifacts more accessible, reproducible, and verifiable. Upon submission of the camera-ready versions of the papers, the Open Science Co-Chairs assess the state of Open Science of submissions to REFSQ’26.

In addition to continuing and expanding the existing Open Science Policy, which all authors are encouraged to adhere to, REFSQ’26 and the Open Research Knowledge Graph (ORKG) organize the second Open Science Competition with two challenges to contribute to the promotion of Open Science in Requirements Engineering.

All authors are invited to take up these challenges. Fame, honor, ORKG Awards, and prize money await you.

Open Science Competition

As at REFSQ’25, the Open Science Track of REFSQ’26 and the Open Research Knowledge Graph (ORKG) organize the second Open Science Competition to encourage and support authors in promoting Open Science in Requirements Engineering. This competition aligns with the broader movement of Open Science and the Open Science Policy of REFSQ, which aims to make scientific research more accessible, reproducible, and verifiable. This competition is an opportunity for the research community to showcase its commitment to Open Science and its promotion in Requirements Engineering by making scientific knowledge FAIR - Findable, Accessible, Interoperable, and Reusable. It is a call to action for researchers to adopt practices that will shape the future of scientific publishing and communication.

The competition comprises two challenges that ask authors to make their contributions to research knowledge more explicit with the help of the Open Research Knowledge Graph (ORKG). The best submission in each challenge receives an ORKG award, including prize money. The Open Science Co-Chairs will involve other reviewers to determine the winners.

For further information, questions, or help regarding the Open Science Competition, please contact Tobias Hey (hey@kit.edu) and Katharina Großer (grosser@uni-koblenz.de).

Challenge 1: Annotate your paper with SciKGTeX to describe its research contribution.

The accepted paper that is best annotated with SciKGTeX will be awarded the Best ORKG Annotation Award, including prize money of 100€.

SciKGTeX is a LaTeX package that allows authors to semantically annotate the scientific contribution of their research in the LaTeX document at the time of writing. These annotations are embedded into the PDF’s metadata in a structured XMP format, which can be harvested by search engines and knowledge graphs. This process not only simplifies contributing to research knowledge graphs but also promotes the practice of semantic representation in scientific communication and thus Open Science.

You can find the LaTeX package and more information at the following links:

- GitHub Repository of the LaTeX package with detailed documentation and examples

- Guiding materials for using SciKGTeX from the RE’25 tutorial on Open Science

- Important slide deck: 1. Slides –> “4. RE25 Tutorial - Using SciKGTeX.pdf”

- Example project: 2. Exercise Materials –> 2.1 SciKGTeX Materials –> SciKGTeX_Solution

Task

The first challenge of the Open Science Competition asks authors to annotate their papers using the five default annotations of SciKGTeX, as well as the additional annotation for the open science challenge, if applicable. Comprehensive information on how to use the annotations can be found in the materials provided above.

Remark: Experiments have shown that annotating a paper with SciKGTeX takes 7.5 minutes on average, with a range of 5 to 10 minutes.

Default annotations:

\researchproblem{“Your annotated text describing the research problem”}

\objective{“Your annotated text describing the research objective(s)”}

\method{“Your annotated text describing the research method(s)”}

\result{“Your annotated text describing the research result(s)”}

\conclusion{“Your annotated text describing the research conclusion(s)”}
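As an illustration of how the five default annotations might be placed in running text, the following is a minimal sketch rather than an official template. The title, author, and all sentences are hypothetical. It also assumes that the package is loaded with `\usepackage{scikgtex}` and that the document is compiled with LuaLaTeX, as described in the package documentation; please verify both against the GitHub repository linked above.

```latex
% Minimal sketch of a SciKGTeX-annotated abstract.
% Assumption: compile with LuaLaTeX (check the package documentation).
\documentclass{article}
\usepackage{scikgtex}

% Hypothetical title and author, used only for illustration.
\title{A Hypothetical Study on Requirements Ambiguity}
\author{A. Author}

\begin{document}
\maketitle

\begin{abstract}
% Each command wraps the exact text span that states the respective element.
\researchproblem{Ambiguous requirements frequently lead to rework in later
development phases}.
In this paper, we aim to \objective{investigate how practitioners detect
ambiguity during requirements reviews}.
We conducted \method{a controlled experiment with 20 practitioners}.
We found that \result{checklist-based reviews detected more ambiguities than
ad hoc reviews}.
We conclude that \conclusion{lightweight checklists are a promising aid for
requirements reviews}.
\end{abstract}

\end{document}
```

Keeping each annotated span short and self-explanatory helps with the conciseness criterion, since the annotated text is what ends up in the PDF metadata.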

Additional Open Science Competition annotations:

Paper category annotations:

\contribution*{paper class}{“The category of the paper according to the call for papers, i.e. technical design paper, scientific evaluation paper, experience report paper, vision paper, research preview”}

\contribution{evaluation method}{“The type of evaluation method used, if not already covered by the method annotation”}

- For further information on the paper categories see the Call for Papers.

- For examples of potential evaluation methods see Evaluation Method.

Artifact annotations:

\contribution{replication package}{“Your URL to the replication package”}

\contribution{code repository}{“Your URL to the code repository”}

\contribution{dataset}{“Your URL to the dataset”}

- To adhere to REFSQ’s Open Science Policy, make sure that your artifacts are under an appropriate open license.

Threats to Validity annotations:

\contribution{internal validity}{“Your annotated text describing a threat to internal validity”}

\contribution{external validity}{“Your annotated text describing a threat to external validity”}

\contribution{construct validity}{“Your annotated text describing a threat to construct validity”}

\contribution{conclusion validity}{“Your annotated text describing a threat to conclusion validity”}

...

- Feel free to add any other type of threat to validity in the same schema

- Note that you can have multiple annotations of the same type
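The additional annotations listed above could be used in a paper body roughly as follows. This is a hedged sketch, not a prescribed layout: the DOI and all sentences are hypothetical placeholders, and the fragment assumes a document that already loads SciKGTeX. The starred form is reproduced as given above for the paper class; to our understanding, the package documentation describes it as keeping the annotated value out of the rendered text, which should be checked there.

```latex
% Fragment for the body of a document that already loads scikgtex.

% Paper category, using the starred form shown in the list above.
\contribution*{paper class}{scientific evaluation paper}

% Artifact annotation; the DOI below is a hypothetical placeholder.
Our replication package is available at
\contribution{replication package}{https://doi.org/10.5281/zenodo.0000000}.

% Threats to validity; several annotations of the same type are allowed.
\contribution{internal validity}{Participants were aware of the study goal,
which may have influenced their review behavior}.
\contribution{external validity}{All participants came from a single company,
which limits generalizability}.
\contribution{external validity}{The reviewed specifications stem from one
application domain only}.
```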

Evaluation criteria

Submissions will be evaluated by the following criteria:

- Correctness of the annotated information (with respect to the manuscript)

- Completeness of the annotations (bonus points for relevant, additional annotations)

- Conciseness of the information in the annotations

Participation

To participate in Challenge 1, authors must submit their annotated paper in EasyChair and indicate during the submission process that the paper is annotated with SciKGTeX. The EasyChair submission form provides a specific optional field for this.

Challenge 2: Enrich your paper with an ORKG comparison to provide an overview of the state of the art for your particular research domain, question, or problem.

The accepted paper that is enriched with the best ORKG comparison will be awarded the Best ORKG Comparison Award, including prize money of 200€.

The ORKG is a ready-to-use and sustainably operated platform with infrastructure and services that aims to open scientific knowledge and improve its FAIRness - Findability, Accessibility, Interoperability, and Reusability. Researchers can use the ORKG to systematically describe and organize research papers and their contributions. ORKG Comparisons are a specific feature of the ORKG that allows researchers to organize research contributions in a tabular view, enabling easy comparison and filtering along different properties. This kind of representation facilitates a more efficient review of the state of the art for specific research domains, questions, or problems, thereby enhancing the rigor and accountability of scientific inquiries. However, ORKG Comparisons are not just auxiliary tools. They are recognized as scholarly outputs in their own right, complete with Digital Object Identifiers (DOIs) for easy citation. This feature underscores the ORKG’s potential to enhance the visibility and credibility of research contributions.

You can find more information on how to accompany your paper with an ORKG Comparison at the following links:

- ORKG help center: Accompany your paper with an ORKG Comparison

- Examples of ORKG Comparisons with corresponding publications from the RE’25 tutorial on Open Science

- Important slide deck:

- Slides -> “5. RE25 Tutorial - Using the ORKG.pdf”

- Guiding materials for using the ORKG, including the creation and publication of an ORKG Comparison, from the RE’25 tutorial on Open Science. There are two options to create a comparison:

- Manual creation. Important slide deck:

- Slides -> “5. RE25 Tutorial - Using the ORKG.pdf”

- Using ORKG Ask and ORKG CSV Import. Important slide deck:

- Slides -> “6. RE25 Tutorial - Using the ORKG Ask and ORKG CSV Import.pdf”

- Instruction and demonstration videos on how to create an ORKG Comparison and features of the ORKG interface

Task

The second challenge of the Open Science Competition asks authors to create, publish, and cite an ORKG Comparison in their paper. The ORKG Comparison must provide an overview of the state of the art for the particular research domain, question, or problem of the corresponding paper. A comparison is particularly applicable when you systematically compare studies, software/tools, or approaches. Comprehensive information on how to create, publish, and cite an ORKG Comparison can be found in the materials provided above.

Evaluation criteria

Submissions will be evaluated by the following criteria:

- Expressiveness of (semantic) attributes by which elements are compared

- Level-of-detail and complexity of the attributes

- Exhaustiveness of the compared elements

Participation

To participate in Challenge 2, authors must submit their paper, which cites their published ORKG Comparison, in EasyChair and provide the link to the published ORKG Comparison during the submission process. The EasyChair submission form provides a specific optional field for this.

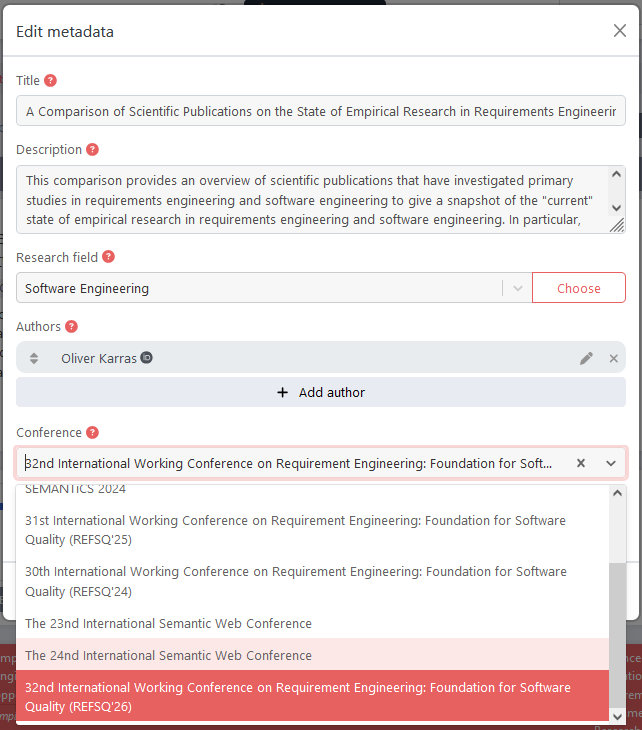

When authors publish their ORKG Comparison, they must assign a DOI to make it persistent and citable and associate the ORKG Comparison with the REFSQ‘26 conference by selecting “32nd International Working Conference on Requirement Engineering: Foundation for Software Quality (REFSQ’26)” from the corresponding drop-down menu, compare the screenshot below.

Open Science Policy

We expect authors to include an explicit Data Availability Statement at the end of their paper (similar to acknowledgments). Specifically, authors should provide details about any material disclosed alongside their submission such as data, or code, and other relevant material. Otherwise, the authors should justify the reasons why disclosure is not possible (e.g., due to IP agreements).
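A Data Availability Statement could look like the following sketch. The wording, the listed materials, the license, and the DOI are hypothetical placeholders, and the unnumbered section heading is an assumption; the heading style should follow the paper template in use.

```latex
% Placed at the end of the paper, next to the acknowledgments.
% All details below are hypothetical and only illustrate the structure:
% what is shared, where, under which license, and what cannot be shared.
\section*{Data Availability Statement}
The interview guide, the anonymized coding results, and the analysis scripts
are archived on Zenodo (DOI: 10.5281/zenodo.0000000) under a CC BY 4.0
license. The raw interview transcripts cannot be disclosed due to
confidentiality agreements with the participating companies.
```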

We strongly encourage authors to read and apply the following best practices to contribute to Open Science:

Upon submission:

- If you have additional material (an artefact) associated with your submission, like data, code, and evaluation material, follow the Open Science - Artefact Management Guideline. In particular:

- Adequately document the artefact to allow for, to the best extent possible, replicability, reproducibility, and reusability. See Document the Material.

- Establish a good intent by making your material available on a persistent repository with a public link, e.g., archiving your replication package on Zenodo or FigShare, which generates a permanent DOI for your material. The DOI should be referenced in the artifact and also in the paper. Note: On both Zenodo and FigShare it is possible to prepare your repository in a way that it does not disclose data for double-blind review. See this guide.

- Annotate your paper with SciKGTeX to describe its research contribution and thus participate in the first challenge of the Open Science Competition.

- Enrich your publication with an ORKG Comparison, if applicable, e.g., to provide an overview of the state of the art for your particular research domain, question, or problem and thus participate in the second challenge of the Open Science Competition.

- Indicate in your EasyChair submission if you annotated your paper with SciKGTeX and provide the link to your published ORKG Comparison if you created one.

Upon acceptance:

- Attribute an appropriate open licence to the shared material and make sure you share this licence together with the material under the same folder in a LICENSE.md file.

- Upload your annotated paper to the ORKG in less than 90 seconds to increase its visibility: YouTube - Import of SciKGTeX annotated PDF into the ORKG.

Document the Material

- Prepare a README.md file that lists precisely what is being shared and how to use and re-use the research material. E.g., by summarizing the following:

- “Summary of Artefact” – Describe what the artefact does, the expected inputs and outputs, and the motivation for using it.

- “Description of Artefact” - Describe the structure of the artefact, e.g., all files included.

- “Authors Information” – List all authors and how to cite the use of this artefact.

- “Artefact Location” – Describe at which URL (or DOI) the artefact can be obtained.

- Introduce a running example that helps reviewers and the community validate and reuse the shared material.

- If you are sharing code, think thoroughly about the requirements for running the code and how to facilitate this process (e.g., use virtual environments such as Docker where possible). It should be exercisable, complete, and include appropriate evidence of verification. Provide the following in the README.md or consider a separate INSTALL.md:

- “System Requirements” – state the required system, programs, and libraries needed to successfully run the artefact.

- “Installation Instructions” – explain in detail how to run the artefact from scratch.

- “Steps to Reproduce” – explain in detail what needs to be done to produce the same data, figures, and tables as presented in your submission.

Further reading:

- Guiding materials for using SciKGTeX and ORKG Comparisons:

O. Karras, L. John, A. Ferrari, D. Fucci, D. Dell’Anna: Supplementary Materials of the Tutorial: “Promotion of Open Science in Requirements Engineering: Leveraging the ORKG and ORKG Ask for FAIR Scientific Information” (2.0), Zenodo, Link

- General introduction into Open Science in Software Engineering and generally recommended practices:

D. Mendez, D. Graziotin, S. Wagner, H. Seibold: Open Science in Software Engineering, In: M. Felderer, G.-H. Travassos (eds.) Contemporary Empirical Methods in Software Engineering, Springer, 2020. Link

- Open Science - Artefact Management Guideline:

J. Frattini, L. Montgomery, D. Fucci, M. Unterkalmsteiner, D. Mendez, J. Fischbach: Requirements Quality Research Artifacts: Recovery, Analysis, and Management Guideline, Journal of Systems and Software, 2024. Link